As necessary as conventional quantitative methods might be, the importance of adopting a mixed-methods approach in order to understand and answer complex development questions cannot be overemphasized. This blog is the second of a two-part series by 3ie Senior Research Fellow Michael Bamberger in which he offers detailed guidance on how to design, implement and utilize mixed-methods evaluations. Click here to read the first part in which he explains why an integrated mixed-methods approach is more effective for addressing real-world problems.

Mixed-methods approaches can be applied at all stages of an evaluation and not just during data collection. They seek to combine the strengths of quantitative and qualitative evaluation, two dominant traditions whose researchers work with different purposes and methods. While there is no standard mixed-methods approach, a wide range of tools and techniques can be adapted to the specific context of each evaluation.

Many mixed-methods evaluations will have three components: a qualitative, a quantitative, and a mixed-methods component that synthesizes the other two.

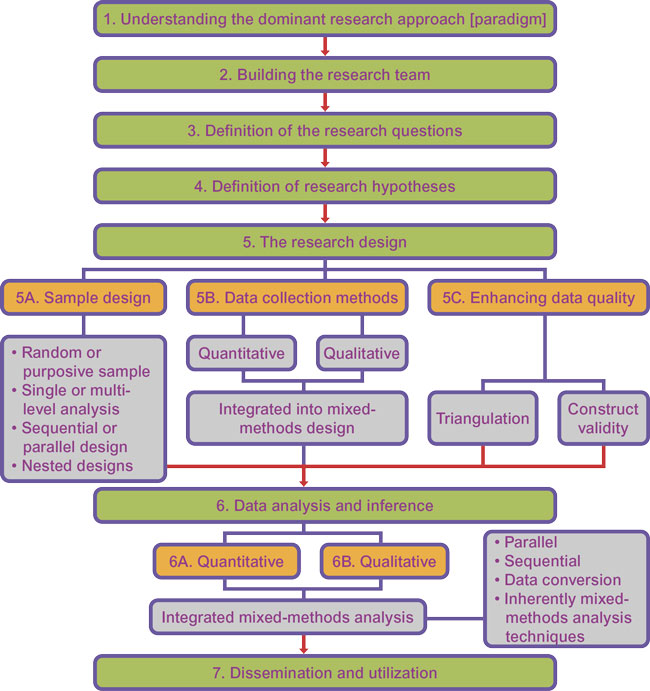

Designing, implementing, and utilizing mixed-methods evaluations requires the following key steps (Figure 2.1).

Step 1: Understanding the dominant approach of the researchers

Mixed methods play quite different roles depending on whether they complement a quantitative-dominant or a qualitative-dominant evaluation (section 2.1):

- For quantitative-dominant evaluation (research), mixed methods are often used to provide a deeper understanding of the social and political context within which a program operates, the organizational processes, behavior, and motivations, and to explain why, how, and for whom intended outcomes were achieved (or not achieved).

- For qualitative-dominant evaluations, mixed methods are often used to enhance the representativity and generalizability of the findings (how much change, for whom, and the statistical significance).

Figure 2.1 The mixed-methods evaluation strategy

Step 2: Building the research team

Conducting a mixed-methods evaluation will often require the research team to broaden its range of research expertise. Over the short run, this often means sub-contracting the parts of the research with which the team is not familiar or contracting a consultant. Over the longer run, the research team may consider hiring staff who have the required expertise or who can provide the capacity development that current staff members need. When bringing in consultants or sub-contracting parts of the study, it is important to incorporate the new research expertise early in the research planning to allow time for each group to become familiar with the approach of the other and to understand how the research design must be adapted to fully benefit from the integrated approach. However, it is commonly the case that the evaluation is designed before the consultants are brought in, and they are asked to implement a certain number of research activities that have already been finalized. While this latter scenario can be considered a multi-method evaluation (with parallel research strands), it is not a true mixed-methods design and much of the potential synergy is lost.

Additionally, fully incorporating the new team members requires strong team leadership and time for team-building.

Step 3: Defining the research questions

Mixed methods combine four main approaches to understanding the program being studied and defining the key research questions. The first three are a review of secondary data sources, lessons from earlier projects, and interviews with stakeholders (and key informants). The fourth, diagnostic studies, helps evaluators understand the economic, political, socio-cultural, historical, and other relevant dimensions of the broader context within which the program operates (or will operate).

Diagnostic studies are a key feature of the mixed-methods approach, which emphasizes that development issues are multi-dimensional and that different stakeholders have different perspectives, expectations, and priorities. The approach also considers the interaction between sectors: for example, an education program will be influenced by the status of health, transport and infrastructure, and agriculture, among others. A diagnostic study involves spending significant amounts of time visiting the affected populations to understand their experiences and perspectives, the history of the community, and their expectations from and about the proposed project.

Step 4: Defining the research hypotheses

Quantitative research hypotheses are normally derived deductively from existing theories often supported by literature reviews. In contrast, qualitative hypotheses are usually derived inductively based on observations in the field (section 2.2). So, while quantitative deductive hypotheses are usually defined at the start of the evaluation before data collection begins, qualitative inductive hypotheses evolve as data is collected and there is a better understanding of the issues being studied. A strength of the deductive approach is that the hypotheses are clearly defined at the start of the study so that sample design and data collection can be designed to permit rigorous testing of the hypothesis. The limitation is that the evaluation is implemented without a full understanding of the specific project context. The mixed-methods approach permits the use of a three-stage hypothesis development:

- Stage 1: diagnostic study to provide a deep understanding of the context and generate an inductive hypothesis that captures the unique characteristics of the program context (section 2.3)

- Stage 2: expanded deductive hypothesis that incorporates the in-depth understanding provided by the inductive hypothesis

- Stage 3: updated inductive hypothesis that can be incorporated at a later point in the evaluation

Step 5: The research design

5A Sample design

There are four sampling decisions in a mixed-methods design:

- Is the sample design random or purposive? (section 2.4)

- Is the study conducted at a single level (e.g., the community level) or at several levels (e.g., community health centers and district health offices)?

- Is the design sequential (quantitative and qualitative designs are used one after the other) or parallel (both used simultaneously) (section 2.5)?

Sequential designs are the most common as they are logistically easier to manage (it is very difficult to coordinate two different studies at the same time). However, triangulation during data collection, to test the reliability and validity of the data collection process, is an example of a parallel mixed-methods design.

- Is there a use for nested designs? (section 2.6)
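To make the sampling decisions above more concrete, here is a minimal sketch of one possible nested design: a random sample for the survey, followed by a purposive sub-sample of contrasting cases drawn from within it. The household list, the `income` field, and the rule of picking the lowest- and highest-income households are all illustrative assumptions, not a recommended selection rule.

```python
import random

def draw_nested_sample(households, n_survey, n_cases, seed=0):
    """Random survey sample, then a purposive sub-sample nested inside it."""
    rng = random.Random(seed)
    # Quantitative strand: simple random sample for the survey.
    survey = rng.sample(households, n_survey)
    # Qualitative strand: purposive selection within the survey sample.
    # Assumption: contrasting cases are the poorest and richest households.
    ranked = sorted(survey, key=lambda h: h["income"])
    half = n_cases // 2
    cases = ranked[:half] + ranked[len(ranked) - (n_cases - half):]
    return survey, cases

# Hypothetical sampling frame of 100 households with made-up incomes.
households = [{"id": i, "income": 50 + (i * 37) % 200} for i in range(100)]
survey, cases = draw_nested_sample(households, n_survey=20, n_cases=4)
```

Because the case-study households are drawn from the survey sample, their in-depth interviews can be linked back to their survey responses during analysis.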

5B Data collection methods

Mixed-methods studies normally combine conventional quantitative and qualitative data collection methods (section 2.7) with the integration of both into mixed-methods data collection.

5C Enhancing data quality

A priority of mixed methods is to combine different tools to enhance data quality. This is done through triangulation and through techniques such as diagnostic studies, in-depth interviews, and participant observation, which help to understand the context within which indicators are used.
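One simple form of triangulation is to compare the same indicator from two sources and flag the units where they disagree. The sketch below assumes hypothetical attendance rates, one set reported in a survey and one recorded by an observer, and an arbitrary 20% relative tolerance; in practice the threshold and the follow-up procedure would depend on the evaluation.

```python
def flag_discrepancies(reported, observed, tolerance=0.2):
    """Return ids whose two sources differ by more than `tolerance` (relative)."""
    flagged = []
    for unit_id, reported_value in reported.items():
        if unit_id not in observed:
            continue  # no second source, so nothing to triangulate
        observed_value = observed[unit_id]
        baseline = max(abs(reported_value), abs(observed_value))
        if baseline == 0:
            continue  # both sources report zero, so they agree
        if abs(reported_value - observed_value) / baseline > tolerance:
            flagged.append(unit_id)
    return flagged

# Hypothetical attendance rates from a survey and from direct observation.
survey_attendance = {"school_a": 0.95, "school_b": 0.90, "school_c": 0.60}
observed_attendance = {"school_a": 0.92, "school_b": 0.55, "school_c": 0.58}
to_revisit = flag_discrepancies(survey_attendance, observed_attendance)
```

Here only `school_b` is flagged, which would make it a candidate for a follow-up visit or in-depth interview to find out why the two sources diverge.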

Step 6: Data analysis and inference

Mixed-methods data analysis includes three components:

- Qualitative analysis: the main types are categorizing, contextualizing, theming and comparing, and displaying (section 2.8)

- Quantitative analysis: the main types are descriptive versus inferential analysis, and parametric versus non-parametric

- Integrated mixed-methods analysis: where the above two are incorporated

The basic models of integrated mixed-methods analysis are:

- Parallel analysis: when the quantitative and qualitative data sets are analyzed independently and the findings of one do not affect the design of the other. In this case, the analysis of the linkages between the quantitative and qualitative findings is done at the interpretation stage

- Sequential analysis: when the findings of one part of the analysis determine the structure of the analysis of the other part (section 2.9)

- Conversion: when qualitative data is converted (“quantized”) into a metric that permits statistical analysis. One common approach is the use of rating scales (section 2.10), another is the use of non-parametric statistics such as Chi-square. Similarly, quantitative data is classified (“qualitized”) into descriptive categories that can be used to select case studies or for other descriptive purposes

- Outlier analysis: when cases falling outside of the normal pattern of responses are studied (section 2.11)

- Multi-level mixed data analysis: when data is organized into levels (e.g., household, village school, school district). The data is analyzed separately for each level and then patterns between levels are identified.

- Fully-integrated mixed-methods data analysis: when inherently mixed-methods analysis techniques (Tashakkori et al 2020) are used. These have been developed specifically for mixed-methods analysis (section 2.12)

- Cross-over analysis: when analytical techniques from one tradition are applied to the other (section 2.13). These kinds of analysis do not just refer to switching between quantitative and qualitative analysis, but also between different traditions within each approach.
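The conversion model in the list above can be illustrated with a short sketch. It assumes that coders have already rated each interview transcript on a hypothetical three-point scale (`low`, `medium`, `high`) and that respondents fall into two made-up groups. The rated interviews are "quantized" into a count table, and a Pearson chi-square statistic is computed by hand using only the standard library.

```python
from collections import Counter

# Hypothetical rating scale applied by coders to interview transcripts.
RATING = {"low": 1, "medium": 2, "high": 3}

def quantize(coded_interviews):
    """Turn (group, rating label) pairs into a group-by-rating count table."""
    counts = Counter(coded_interviews)
    groups = sorted({group for group, _ in coded_interviews})
    labels = sorted(RATING, key=RATING.get)  # order columns low to high
    table = [[counts[(g, lab)] for lab in labels] for g in groups]
    return groups, labels, table

def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom for a count table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            if expected > 0:  # skip empty rows or columns
                stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof

# Made-up data: perceived benefit of a program, as rated by coders.
interviews = (
    [("women", "low")] * 6 + [("women", "medium")] * 3 + [("women", "high")] * 1
    + [("men", "low")] * 2 + [("men", "medium")] * 3 + [("men", "high")] * 5
)
groups, labels, table = quantize(interviews)
stat, dof = chi_square(table)
```

With these made-up counts the statistic is about 4.67 on 2 degrees of freedom, below the 5% critical value of 5.99. With so few interviews the chi-square approximation is weak, so a result like this is better treated as a prompt for further qualitative inquiry than as a firm conclusion.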

The interpretation of the findings and inference from mixed-methods analysis combines the different approaches used for assessing threats to validity and adequacy of quantitative and qualitative data analysis (Bamberger and Mabry 2020 Chapter 7).

Step 7: Presentation, dissemination and use of mixed-methods evaluations

Mixed-methods evaluations emphasize approaches to presentation, dissemination and use that capture the perspectives and voices of a wider range of stakeholders than is often the case in other evaluations. All these perspectives must be represented in the evaluation findings, which should not present a single perspective, usually that of the funding agency or a government office. For example, while the construction of a road through a village may be estimated to have a positive economic rate of return, it may be negatively evaluated by some residents, particularly mothers concerned for the safety of their children, who can no longer walk around the village on their own. The mixed-methods evaluation findings must include both perspectives. Before the report is finalized, appropriate mechanisms should be in place to obtain feedback from a wide range of stakeholders, who will require the findings to be disseminated in different ways. While some may expect a formal written report, many community-level and indigenous groups may prefer a discussion in a community meeting or the use of posters, or in some cases community theater or dance. Finally, recommendations on utilization must take into consideration the broader political, economic, and socio-cultural environment.
