In the second blog of his two-part series, 3ie Senior Research Fellow Johannes Linn builds on the discussion in Part I around the factors that support and hinder the scaling process and pathway. In this piece, he writes about both quantitative and qualitative evidence-based evaluation of scaling efforts and the practical application of these approaches.

How to evaluate scaling

So how do we evaluate scaling efforts or, in other words, how do we use evidence to inform the scaling process? There are as many answers to this as there are projects, programs, sectors, thematic areas, etc., but some general ideas may be helpful in addressing this question.

First, consider evidence on whether the intervention “works” as intended at a given (usually small) scale and under given circumstances – here the use of randomized controlled trials (RCTs) is preferred, but qualitative evidence may also be needed; having multiple RCTs in different contexts helps since it allows an evidence-based assessment of contextual factors.

Second, look for evidence to inform the vision of scale – it helps to know what the potential market is and who the expected adopters or beneficiaries are (e.g., small-holder farmers, where they live and what their characteristics are); here one can rely predominantly on quantitative data (surveys).

Third, consider evidence on the enabling factors – this will generally involve a combination of quantitative and qualitative data, for example:

- Policy as an enabling factor: here, quantitative/qualitative analysis of policy and regulatory constraints or incentives can be used (taxes, subsidies, tariffs and quantitative restrictions, regulatory controls including phytosanitary regulations, land use, etc.);

- Fiscal/financial enabling factors: here, one will want to collect data on the costs of intervention and how costs are expected to change along the scaling path (economies or diseconomies of scale) and under different conditions; data on beneficiaries’ or communities’ ability and willingness to pay for products and services (private or public); information on availability of public budget resources from various levels of government (national, provincial, local); and information on how different financing instruments (grants, loans, guarantees, equity contributions, etc.) work at different scaling stages and under different conditions, etc.;

- Institutional enabling factors: here, qualitative/quantitative analysis of the institutional landscape of implementing organizations potentially involved along the pathway is needed, as is qualitative/quantitative information on the readiness/capacity of different institutional actors (e.g., number and qualifications of extension agents);

- Partners/funders as enabling factors: here, qualitative analysis of the institutional landscape of partners potentially involved along the pathway will be helpful, in addition to qualitative information on the readiness/capacity of different partners;

- Environmental enabling factors are especially important for agriculture: here, quantitative/qualitative analysis of environmental resources’ availability/constraints will apply (e.g., water resources, soil quality, etc.);

- Political considerations: here, one can employ quantitative/qualitative analysis of winners and losers from interventions along the scaling pathway and how they map into the political landscape for the intervention to be scaled.
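The point about costs changing along the scaling path can be made concrete with a little arithmetic. The following is a minimal sketch, not a costing method from the blog: the function name `unit_cost`, the `congestion` parameter and all numbers are hypothetical, chosen only to show how a fixed cost spread over more beneficiaries lowers the average cost (economies of scale) while a cost that grows with reach can push it back up (diseconomies of scale).

```python
def unit_cost(n, fixed_cost, variable_cost, congestion=0.0):
    """Average cost per beneficiary when the intervention reaches n beneficiaries.

    fixed_cost    -- one-off cost shared by all beneficiaries (hypothetical)
    variable_cost -- constant cost of serving each beneficiary (hypothetical)
    congestion    -- extra per-beneficiary cost that grows with n, standing in
                     for diseconomies such as reaching remote areas (assumption)
    """
    if n <= 0:
        raise ValueError("n must be positive")
    return fixed_cost / n + variable_cost + congestion * n


# Illustrative numbers only, not data from any project.
for n in (100, 1_000, 10_000):
    without = unit_cost(n, fixed_cost=10_000, variable_cost=20)
    with_congestion = unit_cost(n, fixed_cost=10_000, variable_cost=20, congestion=0.05)
    print(f"n={n:>6}: {without:8.2f} without congestion, {with_congestion:8.2f} with")
```

In this toy setting the average cost keeps falling as long as only the fixed cost matters, but turns upward once the growing cost term dominates, which is why cost data are worth collecting at more than one scale.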

Practical application of the proposed evaluation approach

At the simplest level, the evaluator will ask five questions:

Question 1: Is the project design based on a clear conception of the overall scaling pathway, i.e., is the project addressing a well-specified problem, and is there a vision of scale if the project is successful?

Question 2: Is the range of interventions under the project clearly identified, and is there evidence that the interventions are appropriate, i.e., are they likely to have the expected impact at the particular stage in the scaling process?

Question 3: Have the critical potential enabling factors been appropriately considered and put in place, to the extent possible; if certain constraints cannot be altered (e.g., policy constraints, lack of financing, institutional weaknesses, political opposition, etc.), has the project design been adjusted to reflect the constraints?

Question 4: Is program sequencing appropriate, in terms of continuity beyond project end, in terms of vertical and horizontal sequencing (i.e., local replication across different areas or population groups, versus regional or nationwide program development), in terms of building systematically on the experience of pilots or prototypes, and in terms of a systematic assessment of scalability?

Question 5: Does the monitoring, evaluation and learning (ME&L) approach include an explicit focus on scaling?

The author has used this simple set of questions in working with various development institutions (including IFAD, UNDP, AfDB) and their project and program teams to assess whether their project design and implementation adequately reflected scaling considerations and what needed to be done to improve the scaling focus.
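For teams that want to record answers to the five questions in a structured way, a checklist can be sketched in a few lines. This is only an illustration under stated assumptions: the three-level `Rating` scale, the shortened question labels and the helper `needs_attention` are hypothetical and are not the author's instrument.

```python
from enum import Enum


class Rating(Enum):
    """Hypothetical three-level scale for each question."""
    WEAK = 0
    PARTIAL = 1
    STRONG = 2


# Short labels for the five questions (wording abbreviated for illustration).
QUESTIONS = (
    "scaling pathway and vision",
    "interventions and evidence",
    "enabling factors",
    "sequencing",
    "ME&L focus on scaling",
)


def needs_attention(ratings):
    """Return the questions rated below STRONG, weakest first."""
    missing = [q for q in QUESTIONS if q not in ratings]
    if missing:
        raise ValueError(f"unrated questions: {missing}")
    gaps = [q for q in QUESTIONS if ratings[q] is not Rating.STRONG]
    return sorted(gaps, key=lambda q: ratings[q].value)


# Illustrative ratings only, not taken from any actual project assessment.
example = {
    "scaling pathway and vision": Rating.STRONG,
    "interventions and evidence": Rating.STRONG,
    "enabling factors": Rating.PARTIAL,
    "sequencing": Rating.PARTIAL,
    "ME&L focus on scaling": Rating.WEAK,
}

print(needs_attention(example))
```

Listing the weakest areas first keeps the discussion with the project team focused on what most needs to change, in line with the advice to keep the approach simple.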

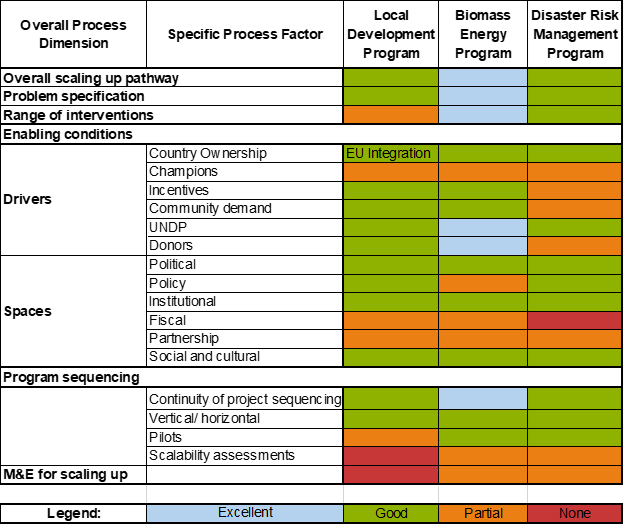

By way of example, take Figure 2, which shows a summary analysis for three projects/programs in Moldova supported by an international development agency, including a bio-energy program that involved a carefully sequenced multi-year, multi-project program in support of biomass energy development for rural communities. As one can see from the color-coded assessment, the biomass project was particularly strong (blue) in the overall design areas at the top of Figure 2, but had a mixed record on the enabling conditions, since it only partially addressed policy, fiscal and partnership aspects. And while excellent on continuity of project engagement, it made only partial use of scalability assessment and gave limited consideration to scaling aspects in its ME&L.

Figure 2: Assessment of scaling up dimensions in three projects/programs in Moldova

Source: Author's assessment

The practical approach presented here is only one of the many available, some more elaborate than others. The best advice to the evaluation practitioner is this: search for an approach that fits your program’s needs, and preferably one that keeps it simple – but in any case, do not forget about the scaling dimension.

Concluding lessons

There are five lessons for scaling design and evaluation:

- Do some broad exploratory analysis on the potential scaling pathway, including the vision and all potential enabling factors (drivers/spaces) – surprises are likely, in that you’ll realize there are important factors you might have neglected with a less comprehensive approach;

- Focus in-depth quantitative and qualitative analysis on the critical drivers and binding constraints;

- A combination of large quantitative data (surveys, etc.), project-level RCTs, small quantitative data (cost and cost/benefit analysis), and qualitative analysis (institutional and political) will likely yield the best results;

- Evidence-based scaling design and evaluation will likely require some technical capacities beyond the traditional technical area experts (including skills and experience in policy, financial, fiscal, environmental and political analysis);

- Keep it as simple as possible; the key is to explore the scaling dimension, rather than abandoning the effort because it seems too complex and costly, given constrained resources.

The Scaling Community of Practice and its evaluation working group are where you will encounter many peers searching for answers to some of these challenges and willing to share their experiences.

This blog has been republished on the India Development Review website.

I very much enjoyed reading the blog Evaluation approaches to scaling, as well as the earlier blog on factors that support or hinder scaling processes and pathways. We support the three broad suggestions on how to use evidence to support scaling processes, which involve:

* The use of (preferably) RCTs, complemented with qualitative evidence, to assess whether the intervention works as intended at a given scale;

* The use of (predominantly) quantitative data, for example from surveys, to explore the ‘market’ potential of the intervention; and

* The use of a combination of quantitative and qualitative data that provide evidence on the enabling (and constraining) factors, such as policy, fiscal/financial, institutional, partners/funding, environmental, and political factors.

It is perhaps useful to expand a little on how this combined collection and analysis of quantitative and qualitative evidence could be shaped. I will do that by sharing our experience from a field evaluation of health sector interventions in Malawi and Zambia that involved surgical team mentoring with a view to scaling up access to essential surgery for rural populations in remote areas of these two countries. The intervention (mentoring of teams of surgical care providers in peripheral hospitals by teams of surgical and anaesthesia specialists from referral hospitals) was a complex one. Our research focused on how to roll it out and sustain it beyond the duration of the EU-funded project under which the mentoring was started and financially supported. We used group model building (GMB) based on stakeholder consultation, and systems science with special techniques (graph theory and the MARVEL method), to explore the dynamics of surgical team mentoring. This work is published in:

1. Broekhuizen H, Ifeanyichi M, Cheelo M, Drury G, Pittalis C, Rouwette E, Mbambiko M, Kachimba J, Brugha R, Gajewski J, Bijlmakers L. Options for surgical mentoring: lessons from Zambia based on stakeholder consultation and systems science. PLoS ONE 2021;16(9):e0257597. doi:10.1371/journal.pone.0257597

and

2. Broekhuizen H, Ifeanyichi M, Mwapasa G, Pittalis C, Noah P, Mkandawire N, Borgstein E, Brugha R, Gajewski J, Bijlmakers L. Improving access to surgery through surgical team mentoring – policy lessons from group model building with local stakeholders in Malawi. Int J Health Policy Manag 2021;x(x):1-12. doi:10.34172/ijhpm.2021.78

Earlier research around the scaling of district-level surgery in Tanzania also made use of GMB:

3. Broekhuizen H, Lansu M, Gajewski J, Pittalis C, Ifeanyichi M, Juma A, Marealle P, Kataika E, Chilonga K, Rouwette E, Brugha R, Bijlmakers L. Using group model building to capture the complex dynamics of scaling up district-level surgery in Arusha region, Tanzania. Int J Health Policy Manag 2020;x(x):1-9. doi:10.34172/ijhpm.2020.249

I think these are very practical examples of evaluation approaches to scaling. They resulted in practical recommendations that were extensively discussed with health programme managers and policy makers in Malawi and Zambia.

For further info, see www.surgafrica.eu

We would appreciate receiving any feedback or suggestions you may have.